Our Names Are at the Top

All of us are judged by what we share and send, even if a machine created it

Thanks in advance for reading and staying engaged with my newsletter. Occasionally I think about stopping, but each week I am glad I carve out the time to get my thoughts down and organized. Writing this newsletter feels like a mix of journaling, communicating, and marketing, and probably a few more things besides.

I often use Claude or ChatGPT to generate starting points for these emails, and then I ultimately wind up rewriting the whole thing. It’s still worth it, because staring at a blank screen (or page) has always been a barrier for me. Correcting and criticizing work that is already on the page is easy!

For me (and I’m guessing for many of you too), criticizing comes with the obligation to offer suggestions, improvements, or alternatives. Ultimately, though, when any of us hits “send,” “public,” “post,” “share,” etc., we have made the decision to put our name on something and release it into the world as our own.

The flip side of “Don’t let the perfect be the enemy of the good”

For many people around the world, generative AI is becoming a standard part of how they work and create, used with a casualness that would have seemed remarkable just a few years ago. Tools like Claude and ChatGPT can now produce a social media post, a homework assignment, a project proposal, or a birthday message in seconds, often with a fluency and polish that meets a standard of “very good.”

Many of us have long struggled to be perfect in our communications. I have spent a lot of time crafting emails, choosing specific verbs, and fretting about tone. There are benefits, of course, but overall I have probably misspent a significant amount of time worrying about language that doesn’t much matter. Given how sophisticated generative AI tools have become, the temptation to simply use what the machine creates without pausing is more understandable to me every day. Many of us long ago adopted autofill, autocorrect, and parts (or even all) of suggested email replies, because we can live with “very good” in so many cases.

In all of those scenarios, though, we are putting our name on our work. We make assumptions, evaluations, and judgments about each other based on what comes after our names, in person and online.

I do not see generative AI output as somehow separate from myself. The technologies are capable, but all of us are more than delivery mechanisms. When your supervisor or client reads something from you, they are trusting your judgment, your knowledge, and your understanding of the moment and the people involved. When your professor grades your essay, they are assessing your understanding, your intellectual growth, and the particular way your mind has been shaped by the material. When a friend opens your birthday note, they are receiving something they believe came from your genuine attention and care. Generative AI can assist with all of those things, but it cannot substitute for your accountability to the people on the receiving end.

This is not a new idea. Ghostwriters have existed for centuries, research assistants have always done heavy lifting, and editors have long shaped final drafts into something the named author could stand behind. ChatGPT, Claude, and others are extending and democratizing this kind of support to writers and creators who in the past have not had access to it.

Still, whatever help we use, the person whose name appears at the top is responsible for the whole of it. As we start operating at a different scale and speed, the responsibility of authorship matters even more.

Putting my name on something is a guarantee that I stand behind it and am willing to answer for it. That promise holds whether you typed every word yourself or shaped a prompt, reviewed the output, edited for tone, and made the judgment that it was ready to share. The process looks different now than it did a generation ago, but the accountability at the center of it remains exactly the same.

My Own Guidelines

In my own writing and consulting work, I use AI tools regularly and find them genuinely useful for thinking through ideas or getting unstuck when I’m staring at the screen. I’m not consistent on the front end of creating, but I try to be very consistent on the back end, before sharing. I had to think about this to get it down, but the steps I have internalized are:

Make sure I am comfortable explaining to my future self the what, why, and how for anything that comes after my name.

Consider what someone who has never met me would think if some of my work were forwarded or shared in any context.

Reflect on how those closest to me would react to what I share or create.

None of these three perspectives has a veto, but all are important to my sense of integrity.

Likewise, I will admit to being a little (and sometimes very) judgmental about emails, articles, social media posts, or any content that feels like a person put very little of their own effort into it. I recognize that others have goals for writing and creating that are different from mine.

For example, someone juggling competing deadlines and all kinds of personal obligations may feel crunched for time. Their goal is to keep all of those balls in the air, and getting something out, even if it’s not their best work, might be critical to a job or other obligation. When I understand the goal, I am much more willing to reserve judgment. The difference between using any tool well and using it dishonestly is what each of us is willing to own.

What’s your current practice when it comes to AI and attribution? I’d love to know where you’re drawing your own lines.

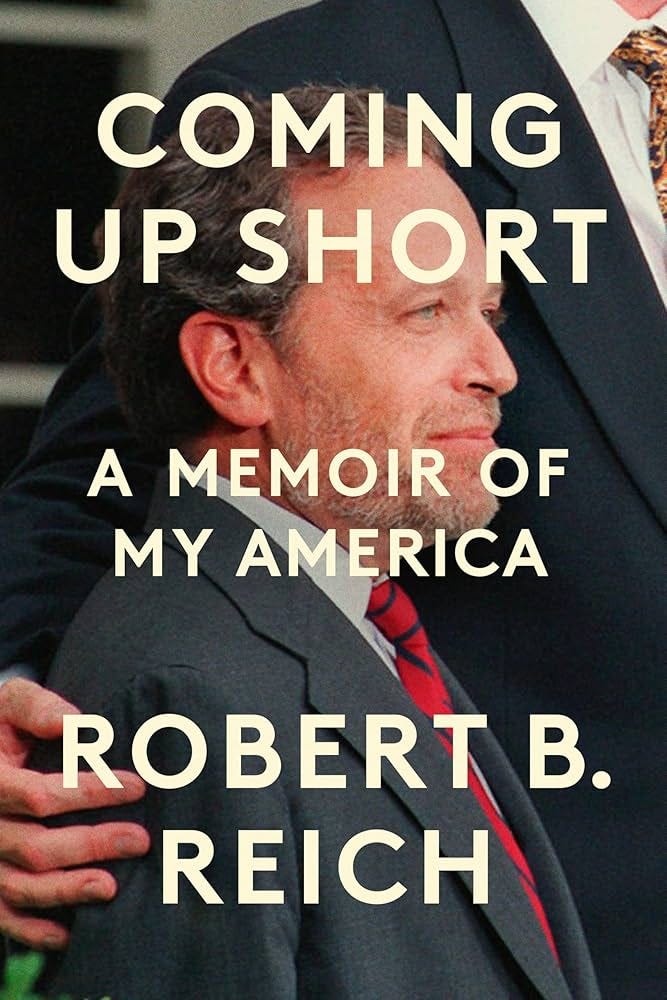

Expert of the week: Robert Reich

The former Secretary of Labor is one of my go-tos for passionate writing about the way things are and the way things could be. I turn to his writing when I want a boost of conviction about justice and fairness, along with a reminder about hope and decency.

Some of his posts are raging screeds, but it is clear that he believes in us and in a better future. His post this week about the disconnect between the labor market, the stock market, and AI was interesting because it offers a set of policy ideas that don’t try to put “the toothpaste back in the tube.” Namely, he proposes the following:

(1) Break up monopolies through major antitrust enforcement.

(2) Regulate AI so corporations using it cannot engage in mass layoffs, but are only permitted to downsize by a small percentage (3 percent?) of their workforce per year.

(3) Establish Medicare for all.

(4) Institute a Universal Basic Income financed by higher taxes on those at the top, so all families have at least a subsistence wage.

These ideas may or may not be the right ones, but they at least move the conversation beyond treating AI developments as separate from the rest of how we live and work in the US and around the world.